Archive for November, 2008

Pay For Performance, Robert Rubin Edition

| Peter Klein |

Remember, it’s not how much you pay, but how. Today’s WSJ profile of Robert Rubin provides some interesting numbers. Citigroup losses over the last year: $20 billion. US government bailout money going to Citigroup in the last month: $45 billion. Rubin’s compensation since becoming senior counselor and a director at Citigroup in 1999: $115 million. Naturally, Rubin says Citi’s near bankruptcy has nothing to do with his leadership. Critics say he encouraged the firm to increase its risk taking in 2004 and 2005. Ah well, another former Golden Boy brought down to earth. Thank goodness something positive is coming out of this mess.

Consider this today’s friendly reminder that corporate welfare is a bipartisan scam.

Update: See also Larry Ribstein.

Micro-Foundations at the Rotterdam School of Management

| Nicolai Foss |

We have blogged extensively on “micro-foundations” here on O&M (particularly in those Golden Days of O&M when the present blogger was more active). The micro-foundations theme seems to be gaining a lot of ground in management recently. About two years ago I had a paper on the subject rejected from a leading management journal because the reviewers and the editor argued that the micro-foundations theme was essentially non-controversial and scholars handled it in a pragmatic manner — i.e., there was no need to raise it as an issue. That same editor, I hear, is now actively talking about the pressing need for micro-foundations in management ;-) This reflects an increasingly widespread discourse on the subject. At the recent Strategic Management Society Conference in Köln micro-foundations were explicitly discussed in several of the PDWs, in David Teece’s keynote speech, in the keynote panel that I participated in, and in lots of paper sessions.

The work that Teppo Felin and I have done on the micro-foundations issue (e.g., here) has been particularly taken up with the lack of clear micro-foundations in the dominant capabilities view of the firm and strategy. Back in 2005 Teppo and I organized a two-day conference in Copenhagen on the subject. Koen Heimeriks, then an Assistant Professor at Copenhagen Business School, is organizing something like a follow-up conference at the Rotterdam School of Management where he is now an Assistant Professor. Keynote speakers are Sid Winter, Maurizio Zollo, and yours truly. Here is the homepage for Koen’s conference. Koen is a very good and meticulous conference organizer, the subject is inherently interesting, so please submit a paper!

Some Interesting Working Papers

| Peter Klein |

- Jennifer Arlen and Eric Talley, “Experimental Law and Economics”

This chapter provides a framework for assessing the contributions of experiments in Law and Economics. We identify criteria for determining the validity of an experiment and find that these criteria depend upon both the purpose of the experiment and the theory of behavior implicated by the experiment. While all experiments must satisfy the standard experimental desiderata of control, falsifiability of theory, internal consistency, external consistency and replicability, the question of whether an experiment also must be “contextually attentive” — in the sense of matching the real world choice being studied — depends on the underlying theory of decision-making being tested or implicated by the experiment.

- Matthew J. Holian, “Optimal Decentralization in Corporations and Federations”

Oates’ Theorem and the M-form Hypothesis are both organizational theories of decentralization, though they deal with different types of organizations. This brief note describes how the two theories complement one another, through both verbal description and mathematical models. The result is a simple but comprehensive account of the delegation problem.

- Abhijit V. Banerjee, Esther Duflo, “The Experimental Approach to Development Economics”

Randomized experiments have become a popular tool in development economics research, and have been the subject of a number of criticisms. This paper reviews the recent literature, and discusses the strengths and limitations of this approach in theory and in practice. We argue that the main virtue of randomized experiments is that, due to the close collaboration between researchers and implementers, they allow the estimation of parameters that it would not otherwise be possible to evaluate. We discuss the concerns that have been raised regarding experiments, and generally conclude that while they are real, they are often not specific to experiments. We conclude by discussing the relationship between theory and experiments.

Identification versus Importance

| Peter Klein |

At a recent workshop the subject of econometric identification came up. Identification is of course the major issue of our day among mainstream empirical economists. Some have described the dissertation process as the “search for a good instrument.” Instrumental-variables estimators have their critics, of course, but these critics are in the minority.

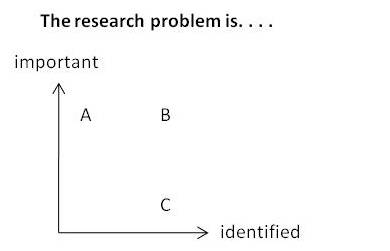

One of the workshop participants, a regular attendee at NBER events, summarized the consensus view among the elites of the profession with the following diagram:

A research problem can be important, and it can be well identified. The ideal problem is one in quadrant B, both important and identified. However, a problem in quadrant C is much more likely to be published in a top journal than a problem in quadrant A.

What does this say about the economics profession?

Hayek Speaks on Inflation and Unemployment

| Peter Klein |

Kudos to Jeff Tucker for unearthing this 1975 interview from Meet the Press. Notes Jeff:

The line of questioning he endures is hilariously naive and idiotic. We think we have a Keynesian problem now; it’s clear that these people really believe that policy makers can manipulate the economy like a machine, trading off unemployment for inflation and back again, with no trouble.

John Cochran suggests another Hayek appearance from 1975, this one a lecture at the University of Colorado (provided by Fred Glahe). Here are a few more from the Mises.org audio archive. And see also the new book.

A Note on Systems Integration

| Dick Langlois |

First let me apologize for being out of circulation for so long. I’ve been inundated with teaching and committee work this semester, but I hope to get back in the swing of things as the year winds down.

The New York Times had an interesting article the other day on a company called Super Micro Computer, a public family-run company in San Jose that puts together leading-edge servers and other hardware for clients that include eBay and Yahoo. The company sells high performance and speed, both the speed of the computer and the speed of the company in designing and delivering its products.

Whereas rivals long ago sent key design work to Asia to take advantage of cheaper, plentiful labor, Super Micro still relies on hundreds of expensive engineers working at its San Jose headquarters. These workers are charged with grabbing the latest and greatest components from suppliers and coming up with new designs months ahead of lumbering heavyweights like Hewlett-Packard and Dell.

Clayton Christensen and his coauthors have argued that a premium on high performance calls for vertical integration and systemic integration in order to fine tune and customize systems, whereas a premium on cost reduction leads to modularity, standardization, and vertical disintegration. The Super Micro case seems to question this conclusion. On the one hand, the company emphasizes design and produces customized units. On the other hand, however, the company is really just a systems integrator — not a vertically integrated company — whose advantage lies in discovering and making use of the innovation of others. In Carliss Baldwin’s phrase, the company “leverages modularity” along the performance margin in much the same way that Dell does (or at least once did) along the cost margin. My conjecture is that, the more inherently modular (whatever that means) the product is, the more systemic integration can be squeezed into a single independent stage of production (systems integration) and the less necessary is genuine vertical integration — even when performance is what matters.

Christina Romer to Head CEA

| Peter Klein |

Obama has named Christy Romer, one of my old professors, to head the Council of Economic Advisers. She’s smart, organized, a great communicator; I expect her to be highly effective. She is a moderate Keynesian, of the New Keynesian variety, best known for her revisionist work challenging the postwar Keynesian consensus view that activist monetary and fiscal policy has lessened the severity of the business cycle compared to the bad old laissez-faire days. See, for example, “Is the Stabilization of the Postwar Economy a Figment of the Data?” AER, June 1986, and “Remeasuring Business Cycles,” JEH, September 1994. A recent Journal of Economic Perspectives piece and her entry on business cycles in the Concise Encyclopedia of Economics summarize this work. Here is more. (Of course, doubts about the effectiveness of Keynesian stabilization policy have not dampened most macroeconomists’ enthusiasm for, well, Keynesian stabilization policy.)

I met Christy in my first year of graduate school when she co-taught, with Barry Eichengreen, my course in US economic history. I subsequently served for two semesters as her head TA in the large economics principles course (600 students, 16 TAs, one head TA, one professor — quite an operation). Berkeley had in those days a system in which the dissertation adviser (in my case, Oliver Williamson) does not serve on the dissertation proposal committee, and Christy kindly chaired the proposal committee for me, even though the topic (conglomerate diversification) was not in her general area. She is a great teacher and a great manager, careful, patient, and fair. Naturally my top choice for CEA chair would have been someone with views just like, um, mine, but of the realistic candidates Christy is an excellent choice.

What Do Boards Do and How Do They Do It?

| Peter Klein |

A new survey paper on Boards of Directors by Ben Hermalin and Mike Weisbach, updating their 2003 paper.

This paper is a survey of the literature on boards of directors, with an emphasis on research done subsequent to the Hermalin and Weisbach (2003) survey. The two questions most asked about boards are what determines their makeup and what determines their actions? These questions are fundamentally intertwined, which complicates the study of boards due to the joint endogeneity of makeup and actions. A focus of this survey is on how the literature, theoretical as well as empirically, deals – or on occasions fails to deal – with this complication. We suggest that many studies of boards can best be interpreted as joint statements about both the director-selection process and the effect of board composition on board actions and firm performance.

Don’t let James Walsh see this!

Write Like Toni Morrison

| Peter Klein |

Remember the Universal Translator? Peter Wood, in like manner, provides a useful guide to translating regular English prose into the style of Nobel-prizewinning author Toni Morrison, probably the most frequently assigned writer on US college campuses. The basic rules:

- Misuse common phrases

- Embrace inconsistency

- Omit words to create more forceful expression

- Mix up parts of speech

- Chop in self-conscious micro-sentences

He provides some wonderful examples. For instance, this office memo:

Just to remind you, I will be out of the office Tuesday to meet with our supplier, Acme Explosives. Please finish your work on the 2Q budget and let the account rep know that Mr. Coyote’s order will be shipped Thursday.

becomes

The reminding can’t wait the hurry of it. I explain. I know you know of Tuesday, I and Acme Explosives is soon together meet. You can please work, perhaps, the budget’s second quarter, and knowledge the account rep of Mr. Coyote’s Thursday shipment.

Wood also reminds us that Morrison is “the undisputed master of wandering verb tenses” and that she “knows how deftly to insert evocative foreign terms.”

But it is the anachronistic little details that are Morrison’s signature. My favorite occurs late in the book: “Ice-coated starlings clung to branches drooping with snow.” This is the 1690s, two centuries before the eccentric bird lover Eugene Schiffelin introduced starlings to the U.S. by releasing sixty of them in Central Park.

Schiffelin had no idea how the birds would proliferate, crowd out native species, and form enormous squawking, twittering, whistling flocks that seem to fill up whole forests. Starlings seem to propagate as fast as clichés and to descend like clouds of effusive blurbs on overpraised books.

Education Quote of the Day

| Peter Klein |

There is a settled mediocrity in American college teaching, surpassed here and there by talented and energetic individuals, but seldom disturbed in its languorous self-satisfaction. On most campuses, mediocrity can rightly pride itself on being a whole lot better than the conspicuous dreadfulness of a handful of professors who dedicate themselves variously to the nine muses of bad teaching: Boredom, Mumbling, Disorganization, Confusion, Forgetfulness, Stridency, PowerPoint, Textbook, and Vacuity.

That’s from Peter Wood, whose subject is actually the division of labor at many large US universities between tenured/tenure-track faculty, who do research and teach small classes to graduate and advanced undergraduate students, and the non-tenured teaching specialists who handle the bulk of the undergraduate instruction, assisted by a “small army” of graduate and undergraduate TAs. Wood points out, rightly IMHO, that one day the universities may decide that the prestige and grant dollars and other bennies generated by the research faculty aren’t worth their high salaries, perhaps choosing to go the University of Phoenix route instead.

In Praise of the US Auto Industry

| Peter Klein |

The proposed bailout of GM, Ford, and Chrysler overlooks an important fact. The US has one of the most vibrant, dynamic, and efficient automobile industries in the world. It produces several million cars, trucks, and SUVs per year, employing (in 2006) 402,800 Americans at an average salary of $63,358. That’s vehicle assembly alone; the rest of the supply chain employs even more people and generates more income. It’s an industry to be proud of. Its products are among the best in the world. Their names are Toyota, Honda, Nissan, BMW, Mercedes, Hyundai, Mazda, Mitsubishi, and Subaru.

Oh, yes, there’s also a legacy industry, based in Detroit, but it’s rapidly, and thankfully, going the way of the horse-and-buggy business.

I pulled these numbers from Matthew Slaughter’s fine piece in yesterday’s WSJ, “An Auto Bailout Would Be Terrible for Free Trade,” which points out that the US is one of the world’s largest recipients of Foreign Direct Investment and that an auto industry bailout would surely reduce the flow of FDI, at the expense of the US economy. “Ironically, proponents of a bailout say saving Detroit is necessary to protect the U.S. manufacturing base. But too many such bailouts could erode the number of manufacturers willing to invest here.” Bailouts may also spur retaliatory actions by governments in US export markets, doing further damage to free trade. In short, what the Big Three and their supporters want is the most crass form of protectionism, a blunt demand that US taxpayers, consumers, and producers fork over the cash, now and in the future, to prop up an inefficient, failing industry.

NB: In 2001 I was part of a delegation of US officials visiting Singapore in advance of negotiations over a possible bilateral free trade agreement. The issue was Singapore’s Government-Linked Companies (GLCs), nominally private firms partially owned by the Singaporean government. Did these links constitute a trade barrier, putting US firms doing business in Singapore at a competitive disadvantage? We interviewed US executives based in Singapore and learned that the government did not seem to offer the GLCs special favors in input or output markets (though they did benefit from a lower cost of capital). Anyway, as I read Slaughter’s piece I imagined myself as a Singaporean official visiting the US, interviewing foreign executives in the financial-services and, perhaps, automobile industries, asking if they thought US companies got special government protection. To ask this question is to answer it.

Entrepreneurship Links

| Peter Klein |

- The World Bank’s 2008 Entrepreneurship Survey and Database. Includes over 100 countries.

- A new RAND report, Enhancing Small-Business Opportunities in the DoD. Our own Cliff Grammich is one of the authors.

- The Call for Papers for the Searle Center’s Second Annual Research Symposium on The Economics and Law of the Entrepreneur (11-12 June 2009). I attended the first one and it was really great.

Bailout Bingo

| Peter Klein |

You’re probably familiar with Buzzword Bingo. Let’s create a version to accompany news reports, editorials, and political speeches on the financial-market and other bailouts. Keep a card with you as you read the newspaper, watch or listen to broadcast news, visit with Barney Frank, or follow the conversation at your next hoity-toity dinner party. Check off each term as you hear or read it and shout “Bingo!” when you have five in a row, vertically, horizontally, or diagonally. Here’s a sample card. Please add suggestions for additional words and phrases in the comments.

| Protecting jobs | Ripple effect | Necessary intervention | Can’t afford to do nothing | Life blood of the economy |
| Corporate greed | Market failure | Temporary relief | Decisive action | Frozen markets |
| Nonpartisan solution | Maintain competitiveness | FREE SPACE | Sit idly by | Domino effect |
| No fault of their own | Stability | Public investment | Congressional authority | Too big to fail |
| Head in the sand | Conservatorship | Critical industry | Creative solutions | Public-private partnership |
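For readers who would rather automate the shouting, here is a minimal Python sketch of the win check described above: five in a row, vertically, horizontally, or diagonally, with the free space always counted as marked. The names `SAMPLE_CARD`, `FREE`, and `has_bingo` are my own inventions for illustration, and the sketch assumes a square card and exact phrase matches.

```python
from typing import List, Set

FREE = "FREE SPACE"  # hypothetical marker for the always-checked center square

# The sample card from this post, row by row.
SAMPLE_CARD: List[List[str]] = [
    ["Protecting jobs", "Ripple effect", "Necessary intervention",
     "Can’t afford to do nothing", "Life blood of the economy"],
    ["Corporate greed", "Market failure", "Temporary relief",
     "Decisive action", "Frozen markets"],
    ["Nonpartisan solution", "Maintain competitiveness", FREE,
     "Sit idly by", "Domino effect"],
    ["No fault of their own", "Stability", "Public investment",
     "Congressional authority", "Too big to fail"],
    ["Head in the sand", "Conservatorship", "Critical industry",
     "Creative solutions", "Public-private partnership"],
]


def has_bingo(card: List[List[str]], heard: Set[str]) -> bool:
    """Return True if any full row, column, or diagonal of the card is marked."""
    n = len(card)
    # A square counts as marked if it is the free space or the phrase was heard.
    marked = [[cell == FREE or cell in heard for cell in row] for row in card]
    any_row = any(all(row) for row in marked)
    any_col = any(all(marked[r][c] for r in range(n)) for c in range(n))
    main_diag = all(marked[i][i] for i in range(n))
    anti_diag = all(marked[i][n - 1 - i] for i in range(n))
    return any_row or any_col or main_diag or anti_diag


if __name__ == "__main__":
    heard = {"Necessary intervention", "Temporary relief",
             "Public investment", "Critical industry"}
    if has_bingo(SAMPLE_CARD, heard):
        print("Bingo!")
```

Thanks to the free space, the middle column, the middle row, and both diagonals need only four phrases, so one op-ed may well be enough.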

Against Gladwellism

| Peter Klein |

The blogosphere is atwitter over Malcolm Gladwell’s new book, Outliers (#4 on Amazon this morning). Outliers studies high achievers in art, science, business, and other fields, seeking to refute the myth of the self-made man: high achievers “are invariably the beneficiaries of hidden advantages and extraordinary opportunities and cultural legacies that allow them to learn and work hard and make sense of the world in ways others cannot.”

Abbeville (via 3quarks) expresses some reservations, not about Gladwell’s conclusion, but about his approach:

[Gladwell] is a skilled and entertaining writer, exemplifying the modern New Yorker “house style” for journalism with its combination of solid research, amused detachment, and quirky anecdotes in the Ken Burns mold. Tragically, Gladwell is also often very wrong. His work, famous for its forays into sociology, social psychology, market research, and other trendy disciplines, is a testament to both the exciting possibilities and the intellectual limitations of those fields. His penchant for what might be called pop statistical analysis sometimes leads to elegant, well-supported, and counterintuitive conclusions, but just as often recalls the man who couldn’t possibly have drowned in that river because its average depth was five feet. (more…)

Sentences to Ponder

| Peter Klein |

Although it does very well on the share of total jobs created by new firms, America scores highest of all in terms of the percentage of its lost jobs that are destroyed by enterprises’ going out of business. Perhaps, in the spirit of Joseph Schumpeter’s theory of creative destruction, it is the ability for firms to fail — and for the entrepreneurs involved to escape without stigma — that provides the overlooked but crucial part of the American entrepreneurial culture.

That’s from an Economist story on Global Entrepreneurship Week (did you know it started Monday?) that focuses on the Kauffman Foundation’s work on collecting and analyzing global data on startups, firm growth, innovation, bankruptcy, and the like. The story opens with one of the all-time great (and probably apocryphal) Bushisms: “the problem with the French is they don’t have a word for entrepreneur.” (Thanks to Chris Boessen for the pointer.)

Alfie Kohn on Parenting

| Peter Klein |

A new item for our Alfie Kohn archive, courtesy of Joshua Gans.

One comment on the alleged crowding-out effect of extrinsic motivation. As I explain to my students, even when behavior is intrinsically motivated, extrinsic motivation can have powerful effects on the margin. For example, I didn’t go into academia for the money, but because I love research and teaching, I like keeping my own hours, I enjoy walking through leafy quads, and I look right smart in a tweed jacket with elbow patches. However, on the margin, the choice between teaching one more course or one less, attending this conference or that, working on one paper or another, is most definitely affected by monetary and other professional rewards.

Likewise, I want my children to work hard, be kind to others, eat their vegetables, clean their rooms, and so on, not because of rewards and punishments, but because those are the right things to do. But do I use extrinsic motivation to elicit marginal changes in behavior, subject to those general rules? You bet your Christmas Wish List I do.

Utrecht Conference on Firm Governance

| Peter Klein |

Utrecht University is sponsoring a conference on “The Governance of the Modern Firm,” 11-13 December 2008, featuring contributions from Paul Davies, Roberta Romano, Bill Lazonick, and many others. (Via Geoff Hodgson.)

Entrepreneurship: Hot and Cold

| Peter Klein |

The current issue of Nature features “The Innovative Brain” by a team of Cambridge researchers, suggesting that entrepreneurs (defined here as proprietors or founders) excel at “hot” decision making, an emotional, intuitive, under-pressure kind of reasoning familiar to neuroscientists.

Our groups of entrepreneurs and managers showed comparable performance on the Tower of London test of cold processes, with no differences in the number of solutions solved at the first attempt. On the Cambridge Gamble Task, both groups were able to make high-quality decisions, selecting the majority colour at least 95% of the time. The remaining 5% is likely to be accounted for by ‘gambler’s fallacy’ in which subjects try to second guess the computer by choosing the less likely colour. However, when subjects were introduced to the hot components of the task, differences were observed. We found that entrepreneurs behaved in a significantly riskier way, betting a greater percentage of their accrued points (63%) than their managerial counterparts (51%). Both groups adjusted their wagering according to the likelihood of success (dependent on the ratio of red-to-blue boxes). The only performance difference was the amount that was bet.

Interestingly, this risk-taking performance in the entrepreneurs was accompanied by elevated scores on personality impulsiveness measures and superior cognitive-flexibility performance. We conclude that entrepreneurs and managers do equally well when asked to perform cold decision-making tasks, but differences emerge in the context of risky or emotional decisions.

The researchers suggest that entrepreneurship education should perhaps give more attention to emotional, impulsive behavior rather than teach business planning and market research. (But, really, do 18-22 year olds need instruction in this area?) And here’s something to delight your local pharmacist:

These cognitive processes are intimately linked to brain neurochemistry, particularly to the neurotransmitter dopamine. Using single-dose psychostimulants to manipulate dopamine levels, we have seen modulation of risky decision-making on this task. Therefore, it might be possible to enhance entrepreneurship pharmacologically.

The exercise is a bit scientistic for my tastes, but interesting nonetheless. (Thanks to Yiyong Yuan for the pointer.)

All Your Firm Are Belong to Us

| Peter Klein |

Time for a little O&M poll. Which US firms or industries will be bailed out and/or nationalized next? This year we’ve had investment banks, commercial banks, insurance companies, and (coming soon) the automobile industry. Who’s next? Another financial-sector player, like student-loan packagers and resellers? More insurance companies? The steel industry? Food processing? Healthcare? Boatmakers? Perhaps professional service firms? How about Olympics organizers? Take a guess, and feel free to provide additional suggestions too in the poll or in the comments. We’re pretty far down the slippery slope; what do you think the rest of the journey will look like?

Creative Capitalism

| Peter Klein |

The book is coming out in a few weeks, and the blog is back in business. I didn’t follow all the previous discussion but what I read was of high quality and reflected diverse, and interesting, perspectives.
