Posts filed under ‘Methods/Methodology/Theory of Science’

SMACK-down of Evidence-Based Medicine

| Peter Klein |

As a skeptic of the evidence-based management movement (championed by Pfeffer, Sutton, et al.), I was amused by a recent spoof article in the Journal of Evaluation in Clinical Practice, “Maternal Kisses Are Not Effective in Alleviating Minor Childhood Injuries (Boo-Boos): A Randomized, Controlled, and Blinded Study,” authored by the Study of Maternal and Child Kissing (SMACK) Working Group. Maternal kisses were associated with a positive and statistically significant increase in the Toddler Discomfort Index (TDI):

Maternal kissing of boo-boos confers no benefit on children with minor traumatic injuries compared to both no intervention and sham kissing. In fact, children in the maternal kissing group were significantly more distressed at 5 minutes than were children in the no intervention group. The practice of maternal kissing of boo-boos is not supported by the evidence and we recommend a moratorium on the practice.

The actual author, Mark Tonelli, is a prominent critic of evidence-based medicine, described by the journal’s editor as a “collapsing” movement and in a recent British Medical Journal editorial as a “movement in crisis.” Most of the criticisms of evidence-based medicine will sound familiar to Austrian economists: overreliance on statistically significant, but clinically irrelevant, findings in large samples; failure to appreciate context and interpretation; lack of attention to underlying mechanisms rather than unexplained correlations; and a general disdain for tacit knowledge and understanding.

My guess is that evidence-based management, which is modeled after evidence-based medicine, is in for a similarly rocky ride. Teppo had some interesting orgtheory posts on this a few years ago (e.g., here and here). Evidence-based management has been criticized, as you might expect, by critical theorists and other postmodernists who don’t like the concept of “evidence” per se, but the real problems are more mundane: what counts as evidence, and what conclusions can legitimately be drawn from this evidence, are far from obvious in most cases. Particularly in entrepreneurial settings, as we’ve written often on these pages, intuition, Verstehen, or judgment may be more reliable guides than quantitative, analytical reasoning.

Update: Thanks to Ivan Zupic for pointing me to a review and critique of EBM in the current issue of AMLE.

Azoulay on Star Scientists

| Peter Klein |

Pierre Azoulay has written a number of important and interesting papers on the economics and sociology of science: How does teamwork affect science? What are the relationships among scientists and students, collaborators, and rivals? A new paper with Christian Fons-Rosen and Joshua S. Graff Zivin looks at the unexpected death of a “star” scientist to identify the (exogenous) impact of the star’s research on her field. The main result, that stars matter, is perhaps not surprising, but the magnitude of the effect is remarkable.

Consistent with previous research, the flow of articles by collaborators into affected fields decreases precipitously after the death of a star scientist (relative to control fields). In contrast, we find that the flow of articles by non-collaborators increases by 8% on average. These additional contributions are disproportionately likely to be highly cited. They are also more likely to be authored by scientists who were not previously active in the deceased superstar’s field. Overall, these results suggest that outsiders are reluctant to challenge leadership within a field when the star is alive and that a number of barriers may constrain entry even after she is gone.

Read the whole thing, as well as related work by Toby Stuart, Joshua Graff Zivin, and others.

Update: Here is a non-technical summary on Vox.com.

Can Junior Scholars Do Risky Research?

| Peter Klein |

The AOM’s Entrepreneurship Division listserv has been featuring an interesting discussion on the incentives facing junior (and senior) scholars for doing “high-risk” research. To be sure, most early-career scholars focus on making incremental contributions to well-established research programs; after securing tenure, the argument goes, they can be bolder and more experimental. The problem is that, in many academic fields, junior scholars have the greatest capacity for novelty and creativity (in mathematics, for example, you may be past your prime at 35). I’m not sure this is true in the social sciences, which may place too much emphasis on clever technique over mature reasoning. But certainly many academics worry that the need to publish or perish makes it difficult for junior scholars to take chances, to the detriment of scientific progress.

I really liked Jeff McMullen’s comments on the problem, reproduced here with permission:

Dean Shepherd and I wrote a paper about this issue several years ago, which grappled with some of these issues, especially what “risky research” means to tenure track researchers. Here’s the reference:

McMullen, J. S., & Shepherd, D. A. (2006). Encouraging Consensus‐Challenging Research in Universities. Journal of Management Studies, 43(8), 1643-1669.

I wanted to write that paper because I was starting off my career and wanted to do consensus-challenging research, but I also wanted to understand the consequences of employing such a career strategy. Much of what Dean and I discovered in that research has only intensified over the years as competitive pressures have made institutional incentives that much more uniform.

The challenge for me personally, however, is not the incentives and institutional pressures; instead, it is having the moral courage to conduct research that I believe is important and valuable even though I know the academy may not yet value it, at least not yet. Will I be able to meet the high productivity bar of my colleagues whose research or approach is more mainstream? Some of us are drawn to topics that are mainstream (count your blessings you lucky dogs), but some of us just have to let our freak flags fly. What is the cost of doing research we care about and do we have the courage to pay this price?

Like other innovations, consensus-challenging research is uncertain. Just like routine must be the norm for innovation to mean anything, incremental, consensus building research has to be the norm for any notion of uncertain, consensus-challenging research to make sense. Sometimes uncertainty bearing pays off economically, but more often it does not. Therefore, uncertain payoffs are likely to be motivated by incentives that are not economic — e.g., intrinsic motivation such as intellectual curiosity or feeling like we have said something original if that’s even possible. Perhaps, this is how it should be.

So, the real question for me is and has been through much of my career: how much is it worth to me in terms of institutional status, job security, promotion, or raises to forgo incremental publications and the accolades that come with those to write papers I care about? What is the optimal blend that I might stay employed yet truly care deeply about what I write? Can I live with socio-emotional costs of not being as productive as my colleagues?

For the most part, I have been blessed to be surrounded by colleagues who have valued me and what I do, but I also sought to work for institutions and with colleagues who I believed valued what I valued or at least had that capacity.

Can the system be better? Absolutely, it could be more forgiving. We could lower the institutional costs of innovative research. But, the system only has as much power as you and I choose to give it over our hearts and minds. Great leaders throughout history ranging from Jesus to Gandhi to King to Mandela have confronted a similar choice between compliance and civil disobedience and have had the moral courage to choose civil disobedience despite consequences that dwarf what you and I face. Changing the system starts first with having the moral courage to make peace with the worst possible outcome and yet still having the conviction to advance what we believe in.

So, let us ask what we might change “out there” to make science more inclusive, but let us not forget to ask what we need to change in ourselves. Like the entrepreneurs we study, meaningful work has a price, and may only be meaningful because it does.

Incentives, Ideology, and Climate Change

| Peter Klein |

We’ve written before on the institutions of scientific research which, like other human activities, involves expenditures of scarce resources, has benefits and costs that can be evaluated on the margin, and is affected by the preferences, beliefs, and incentives of scientific personnel (1, 2, 3). This sounds trite, but the view persists, especially among mainstream journalists, that science is fundamentally different, that scientists are disinterested truth-seekers immune from institutional and organizational constraints. This is the default assumption about scientists working within the general consensus of their discipline. By contrast, critics of the consensus position, whether inside or outside the core discipline, are presumed to be motivated by ideology or private interest.

You don’t need to be Thomas Kuhn, Imre Lakatos, or any modern historian or philosopher of science to find this asymmetry puzzling. But it is the usual assumption in particular areas, most notably climate science. A good example is this recent New York Times piece by Justin Gillis, “Short Answers to Hard Questions About Climate Change.” In response to the question, “Why do people question climate change?” Gillis gives us ideology and private interests.

Most of the attacks on climate science are coming from libertarians and other political conservatives who do not like the policies that have been proposed to fight global warming. Instead of negotiating over those policies and trying to make them more subject to free-market principles, they have taken the approach of blocking them by trying to undermine the science.

This ideological position has been propped up by money from fossil-fuel interests, which have paid to create organizations, fund conferences and the like. The scientific arguments made by these groups usually involve cherry-picking data, such as focusing on short-term blips in the temperature record or in sea ice, while ignoring the long-term trends.

Ignore the saucy rhetoric (critics of the consensus view don’t just question the theory or evidence, they “attack climate science”), and note that for Gillis, opposition to the mainstream view is a puzzle to be explained, and the most likely candidates are ideology and special interests. Honest disagreement is ruled out (though earlier in the piece he recognizes the vast uncertainties involved in climate research). Why so many scientists, private and public organizations, firms, etc. support the mainstream position is not, in Gillis’s opinion, worth exploring. It’s Because Science. The fact that billions of dollars are flowing into climate research (a flow that would slow to a trickle if policymakers believed that man-made carbon emissions are not contributing to global warming) apparently has no effect on scientific practice. The fact that many climate-change proponents are, in general, ideologically predisposed to policies that impose greater government control over markets, that reduce industrial activity, that favor particular technologies and products over others is, again, irrelevant.

Of course, I’m not claiming that climate scientists in or outside the mainstream consensus are fanatics or money-grubbers. I’m saying you can’t have it both ways. If ideology and private interests are relevant on one side of a debate, they’re relevant on the other side as well. Perhaps the ideology and private interests of New York Times writers blind them to this simple point.

Weingast on North

| Peter Klein |

Barry Weingast remembers Doug North at EH.Net (also at the SIOE blog):

His first book, The Economic Growth of the United States, 1790-1860 (1960), helped foster the revolution that came to be known as the “new economic history,” the application of frontier economics to the study problems of the past. He and Bob Fogel were awarded the Nobel Prize in Economics (1993) largely for their leadership in this new research program.

But Doug understood that the neoclassical economics on which he was raised was inadequate to address the problems he sought to answer, namely, why are a few countries rich while most remain poor, some in dire poverty? Much of his best work addressed this question.

Read the whole thing here.

Deaton’s Critique of Randomized Controlled Trials

| Peter Klein |

Because we’ve been somewhat skeptical of randomized-controlled trials — not the technique itself, but the way it is over-hyped by its proponents — you may enjoy Angus Deaton’s critique of RCTs in development economics. I learned of Deaton’s arguments from this excellent piece by Chris Blattman in Foreign Policy. Here is the key paper, Deaton’s 2008 Keynes Lecture at the British Academy.

Instruments of Development: Randomization in the Tropics, and the Search for the Elusive Keys to Economic Development

Angus Deaton

There is currently much debate about the effectiveness of foreign aid and about what kind of projects can engender economic development. There is skepticism about the ability of econometric analysis to resolve these issues, or of development agencies to learn from their own experience. In response, there is movement in development economics towards the use of randomized controlled trials (RCTs) to accumulate credible knowledge of what works, without over-reliance on questionable theory or statistical methods. When RCTs are not possible, this movement advocates quasi-randomization through instrumental variable (IV) techniques or natural experiments. I argue that many of these applications are unlikely to recover quantities that are useful for policy or understanding: two key issues are the misunderstanding of exogeneity, and the handling of heterogeneity. I illustrate from the literature on aid and growth. Actual randomization faces similar problems as quasi-randomization, notwithstanding rhetoric to the contrary. I argue that experiments have no special ability to produce more credible knowledge than other methods, and that actual experiments are frequently subject to practical problems that undermine any claims to statistical or epistemic superiority. I illustrate using prominent experiments in development. As with IV methods, RCT-based evaluation of projects is unlikely to lead to scientific progress in the understanding of economic development. I welcome recent trends in development experimentation away from the evaluation of projects and towards the evaluation of theoretical mechanisms.

Blattman says Deaton has a new paper that presents a more nuanced critique, but it is apparently not online. I’ll share more when I have it.

I Agree with Larry Summers

| Peter Klein |

Justin Fox reports on a recent high-powered behavioral economics conference featuring Raj Chetty, David Laibson, Antoinette Schoar, Maya Shankar, and other important contributors to this growing research stream. But he refers also to the “Summers critique,” the idea that key findings in behavioral economics research sound like recycled wisdom from business practitioners.

Summers [in 2012] told a story about a college acquaintance who as a cruel prank signed up another classmate for 60 different subscriptions of the Book-of-the-Month-Club ilk. The way these clubs worked is that once you signed up, you got a book in the mail every month and were charged for it unless you (a) sent the book back within a certain period of time or (b) went through the hassle of extricating yourself from the club altogether. Customers had to opt out in order to not keep buying books, so they bought more books than they otherwise would have. Book marketers, Summers said, had figured out the power of defaults long before economists had.

More generally, Fox asks, “Have behavioral economists really discovered anything new, or have they simply replaced some wrong-headed notions of post-World War II economics with insights that people in business have understood for decades and maybe even centuries?”

I took exactly the Summers line in a 2010 post, observing that behavioral economics “often seems to restate common, obvious, well-known ideas as if they are really novel insights (e.g., that preferences aren’t stable and predictable over time). More novel propositions are questionable at best.” I used a Dan Ariely column on compensation policy as an example:

He claims as a unique insight of behavioral economics that when people are evaluated according to quantitative measures of performance, they tend to focus on the measures, not the underlying behavior being measured. Well, duh. This is pretty much a staple of introductory lectures on agency theory (and features prominently in Steve Kerr’s classic 1975 article). Ariely goes on to suggest that CEOs should be rewarded not on the basis of a single measure of performance, but multiple measures. Double-duh. Holmström (1979) called this the “informativeness principle” and it’s in all the standard textbooks on contract design and compensation structure (e.g., Milgrom and Roberts, Brickley et al., etc.) (Of course, agency theory also recognizes that gathering information is costly, and that additional metrics are valuable, on the margin, only if the benefits exceed the costs, a point unmentioned by Ariely.)

Maybe Larry and I should hang out.

Henry Manne Quote of the Day

| Peter Klein |

This is actually Richard Epstein writing about Henry Manne, but Richard nicely captures the essence of Henry’s thinking:

The combination of law and economics is a major discipline in … modern law schools, but I do not think that it was always presented to Henry’s liking. In his view, the student’s purpose was to show the power of markets to overcome key problems of information and coordination, not to run a set of exhaustive empirical studies to show that corporate boards would function better if they increased their number of independent directors by 5 percent.

Other Manne items on O&M are here. As I noted in another post, Manne was expert in specific technical areas of law (most obviously, insider trading and corporate takeovers) but very much a generalist in his overall outlook. As Manne once recalled about a 1962 seminar led by Armen Alchian, “All of a sudden, everything that I had done intellectually for thirteen years came together, with this one idea of Alchian’s about the real nature of property rights and the Misesian notion of people making choices, with every choice being a tradeoff.” In other words, a simple and powerful theoretical framework goes a long way in analyzing a broad range of issues, much different from today’s emphasis on behavioral quirks, clever experiments, and similar minutiae.

Casson on Methodological Individualism

| Peter Klein |

Thanks to Andrew for the pointer to this weekend’s Reading-UNCTAD International Business Conference featuring Mark Casson, Tim Devinney, Marcus Larsen, and many others. Mark’s talk (not yet online) focused on the need for methodological individualism in international business research. “Firms don’t take decisions, individuals do. When you say that a firm pursued an international strategy, you really mean that the CEO persuaded the individuals on the board to go along with his or her strategy.” As Andrew summarizes:

Casson spoke at great length about the need for research that focuses on named individuals, is based on the extensive study of primary sources in archives, takes social and political context into account, and which looks at case studies of entrepreneurs in different time periods. In effect, he was calling for the re-integration of Business History into International Business research.

And a renewed emphasis on entrepreneurship, not as a standalone subject dealing with startups or self-employment, but as central to the study of organizations — a theme heartily endorsed on this blog.

Sleeping Beauties

| Peter Klein |

Quick, what do the following articles have in common?

- Maslow, Abraham. 1943. “A theory of human motivation.” Psychological Review 50(4): 370-396.

- Forrester, Jay W. 1958. “Industrial dynamics: a major breakthrough for decision makers.” Harvard Business Review 36(4): 37-66.

- Fisher, Irving. 1933. “The debt-deflation theory of great depressions.” Econometrica 1(4): 337-357.

- Fornell, Claes, and David F. Larcker. 1981. “Evaluating structural equation models with unobservable variables and measurement error.” Journal of Marketing Research 18(1): 39-50.

- Wechsler, Herbert. 1959. “Toward neutral principles of constitutional law.” Harvard Law Review 73(1): 1-35.

- Ellsberg, Daniel. 1961. “Risk, ambiguity, and the Savage axioms.” Quarterly Journal of Economics 75(4): 643-669.

All are designated as “sleeping beauties,” papers that lie dormant for years after publication, then suddenly become highly influential. The term was coined by Anthony van Raan, but sleeping beauties were thought to be rare. A new paper in PNAS by Qing Ke, Emilio Ferrara, Filippo Radicchi, and Alessandro Flammini finds, by contrast, that sleeping beauties are fairly common. Formally, “The beauty coefficient value B for a given paper is based on the comparison between its citation history and a reference line that is determined only by its publication year, the maximum number of citations received in a year (within a multiyear observation period), and the year when such maximum is achieved.” The authors take a large sample of papers from the American Physical Society and Web of Science and identify, describe, and analyze some prominent sleeping beauties. They focus mostly on the physical sciences, but include a few social science datasets in an online appendix, finding several papers including those above. (Most of the sleeping beauties in their social science sample are either experimental psychology papers or statistical or methodological papers that are not really about core social science theory or application.) I assume the social science papers also come from Web of Science, which may not include journals like Economica (hence no Coase 1937), and hence the list above is not totally intuitive.
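For readers who want to see how such a measure behaves, here is a minimal sketch of the beauty coefficient quoted above. It is my own illustration, not the authors' code. The quoted definition says only that the citation history is compared with a reference line fixed by the publication year, the peak yearly citation count, and the peak year. The sketch assumes that the line runs straight from the publication-year count to the peak, and that each year's gap is divided by max(1, citations that year) before summing; both details, along with the function name and the toy citation series, are assumptions.

```python
def beauty_coefficient(citations):
    """Sketch of a beauty coefficient B for one paper.

    citations[t] is the number of citations received t years after
    publication (t = 0 is the publication year). The normalization by
    max(1, c_t) is an assumption, not stated in the quoted definition.
    """
    if not citations:
        raise ValueError("need at least one year of citation counts")

    # Year in which yearly citations peak (first such year if tied).
    t_m = max(range(len(citations)), key=lambda t: citations[t])
    if t_m == 0:
        return 0.0  # peak in the publication year: no dormant period

    c_0, c_m = citations[0], citations[t_m]
    slope = (c_m - c_0) / t_m  # reference line from (0, c_0) to (t_m, c_m)

    total = 0.0
    for t in range(t_m + 1):
        reference = slope * t + c_0
        # Gap between the reference line and the actual count for year t.
        total += (reference - citations[t]) / max(1, citations[t])
    return total


# Hypothetical citation histories, for illustration only.
sleeper = [0, 0, 0, 0, 0, 0, 0, 0, 1, 5, 20]       # dormant, then a late burst
steady = [0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20]   # linear growth to the same peak

print(beauty_coefficient(sleeper))
print(beauty_coefficient(steady))
```

Under these assumptions the steady paper sits exactly on its reference line and scores zero, while the dormant paper scores far higher, which matches the intuition that a long sleep followed by a sharp awakening is what drives B up.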

Anyway, this should provoke some interesting discussion about the diffusion of knowledge. The presence of sleeping beauties could simply mean that some discoveries are difficult to understand and take a while to be appreciated, but could also reflect bandwagon effects, faddish citation practices, and other phenomena that cast doubt on the Whig theory of science.

Schumpeterian Recombination and Scientific Progress

| Peter Klein |

Scientific progress, like economic progress, largely consists of combining and recombining existing resources and knowledge. At least that’s the way I interpret a new paper from Santa Fe Institute researchers Hyejin Youn, Luis Bettencourt, Jose Lobo, and Deborah Strumsky, “Invention as a Combinatorial Process: Evidence from US Patents” (via Steve Fiore):

Invention has been commonly conceptualized as a search over a space of combinatorial possibilities. Despite the existence of a rich literature, spanning a variety of disciplines, elaborating on the recombinant nature of invention, we lack a formal and quantitative characterization of the combinatorial process underpinning inventive activity. Here, we use US patent records dating from 1790 to 2010 to formally characterize invention as a combinatorial process. To do this, we treat patented inventions as carriers of technologies and avail ourselves of the elaborate system of technology codes used by the United States Patent and Trademark Office to classify the technologies responsible for an invention’s novelty. We find that the combinatorial inventive process exhibits an invariant rate of ‘exploitation’ (refinements of existing combinations of technologies) and ‘exploration’ (the development of new technological combinations). This combinatorial dynamic contrasts sharply with the creation of new technological capabilities—the building blocks to be combined—that has significantly slowed down. We also find that, notwithstanding the very reduced rate at which new technologies are introduced, the generation of novel technological combinations engenders a practically infinite space of technological configurations.

Or, as the Santa Fe press release puts it, “Most new patents are combinations of existing ideas and pretty much always have been, even as the stream of fundamentally new core technologies has slowed.” See also the authors’ earlier paper, “Atypical Combinations and Scientific Impact.”

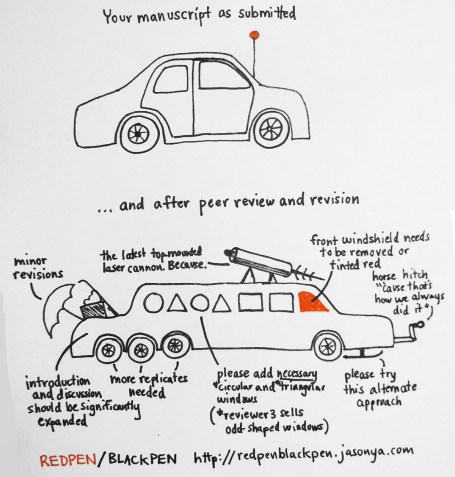

Peer Review in One Picture

| Peter Klein |

Great illustration from the Mad Scientist Confectioner’s Club (via Fan Xia).

Is Economic History Dead?

| Peter Klein |

An interesting piece in The Economist: “Economic history is dead; long live economic history?”

Last weekend, Britain’s Economic History Society hosted its annual three-day conference in Telford, attempting to show the subject was still alive and kicking. The economic historians present at the gathering were bullish about the future. Although the subject’s woes at MIT have been echoed across research universities in both America and Europe, since the financial crisis there has been something of a minor revival. One reason for this may be that, as we pointed out in 2013, it is widely believed amongst scholars, policy makers and the public that a better understanding of economic history would have helped to avoid the worst of the recent crisis.

However, renewed vigour can be most clearly seen in the debates economists are now having with each other.

These debates are those about the long-run relationship between debt and growth initiated by Reinhart and Rogoff, about the historic effectiveness of Keynesian monetary and fiscal policy, and about the role of global organizations like the IMF and World Bank in promoting international coordination.

I guess my view is closer to Andrew Smith’s, that while history should play a stronger role in economics (and management) research and teaching, it probably won’t, for a variety of professional and institutional reasons. Of course, there is a difference between, say, research in economic or business history and “papers published in journals specializing in economic or business history.” In the first half of the twentieth century, quantitative economics was treated as a specialized subfield; now virtually all mainstream economics is quantitative. (The same may happen to empirical sociology, to theorizing in strategic management, and in other areas.)

Are “Private” Universities Really Private?

| Peter Klein |

Jeffrey Selingo raises an important point about the distinction between “public” and “private” universities, but I disagree with his analysis and recommendation. Selingo points out that the elite private universities have huge endowments and land holdings, the income from which, because of the universities’ nonprofit status, is untaxed. He calls this an implicit subsidy, worth billions of dollars according to this study. “Such benefits account for $41,000 in hidden taxpayer subsidies per student annually, on average, at the top 10 wealthiest private universities. That’s more than three times the direct appropriations public universities in the same states as those schools get.”

I agree that the distinction between public and private universities is blurry, but not for the reasons Selingo gives. First, a tax break is not a “subsidy.” Second, there are many ways to measure the “private-ness” of an organization — not only budget, but also ownership and governance. In terms of governance, most US public universities look like crony capitalists. The University of Missouri’s Board of Curators consists of a handful of powerful local operatives, all political appointees (and all but one lawyers) and friends of the current and previous governors. At some levels, there is faculty governance, as there is at nominally private universities. In terms of budget, we don’t need to invent hidden subsidies, we need only look at the explicit ones. If we include federal research funding, the top private universities get a much larger share of their total operating budgets from government sources than do the mid-tier public research universities. (I recently read that Johns Hopkins gets 90% of its research budget from federal agencies, mostly NIH and NSF.) And of course federal student aid is relevant too.

So, what does it mean to be a “private” university?

Kealey and Ricketts on Science as a Contribution Good

| Peter Klein |

Two of my favorite writers on the economic organization of science, Terence Kealey and Martin Ricketts, have produced a recent paper on science as a “contribution good.” A contribution good is like a club good in that it is non-rivalrous but at least partly excludable. Here, the excludability is soft and tacit, resulting not from fixed barriers like membership fees, but from the inherent cognitive difficulty in processing the information. To join the club, one must be able to understand the science. And, as with Mancur Olson’s famous model, consumption is tied to contribution — to make full use of the science, the user must first master the underlying material, which typically involves becoming a scientist, and hence contributing to the science itself.

Kealey and Ricketts provide a formal model of contribution goods and describe some conditions favoring their production. In their approach, the key issue isn’t free-riding, but critical mass (what they call the “visible college,” as distinguished from additional contributions from the “invisible college”).

The paper is in the July 2014 issue of Research Policy and appears to be open-access, at least for the moment.

Modelling science as a contribution good

Terence Kealey, Martin Ricketts

The non-rivalness of scientific knowledge has traditionally underpinned its status as a public good. In contrast we model science as a contribution game in which spillovers differentially benefit contributors over non-contributors. This turns the game of science from a prisoner’s dilemma into a game of ‘pure coordination’, and from a ‘public good’ into a ‘contribution good’. It redirects attention from the ‘free riding’ problem to the ‘critical mass’ problem. The ‘contribution good’ specification suggests several areas for further research in the new economics of science and provides a modified analytical framework for approaching public policy.

Benefits of Academic Blogging

| Peter Klein |

I sometimes worry that the blog format is being displaced by Facebook, Twitter, and similar platforms, but Patrick Dunleavy from the LSE Impact of Social Science Blog remains a fan of academic blogs, particularly focused group blogs like, ahem, O&M. Patrick argues that blogging (supported by academic tweeting) is “quick to do in real time”; “communicates bottom-line results and ‘take aways’ in clear language, yet with due regard to methods issues and quality of evidence”; helps “create multi-disciplinary understanding and the joining-up of previously siloed knowledge”; “creates a vastly enlarged foundation for the development of ‘bridging’ academics, with real inter-disciplinary competences”; and “can also support in a novel and stimulating way the traditional role of a university as an agent of ‘local integration’ across multiple disciplines.”

Patrick also usefully distinguishes between solo blogs, collaborative or group blogs (like O&M), and multi-author blogs (professionally edited and produced, purely academic). O&M is partly academic, partly personal, but we have largely the same objectives as those outlined in Patrick’s post.

See also our recent discussion of academics and social media.

(HT: REW)

On Classifying Academic Research

| Peter Klein |

Should academic work be classified primarily by discipline, or by problem? Within disciplines, do we start with theory versus application, micro versus macro, historical versus contemporary, or something else? Of course, there may be no single “optimal” classification scheme, but how we think about organizing research in our field says something about how we view the nature, contributions, and problems in the field.

There’s a very interesting discussion of this subject in the History of Economics Playground blog, focusing on the evolution of the Journal of Economic Literature codes used by economists (parts 1, 2, and 3). I particularly liked Beatrice Cherrier’s analysis of the AEA’s decision to drop “theory” as a separate category. The Machlup–Hutchison–Rothbard exchange helps establish the context.

[T]he seemingly administrative task of devising new categories threw AEA officials, in particular AER editor Bernard Haley and former AER interim editor Fritz Machlup, into heated debates over the nature and relationships of theoretical and empirical work.

Machlup campaigned for a separate “Abstract Economic Theory” top category. At the time of the revision, he was engaged in methodological work, striving to find a third way between Terence Hutchison’s “ultraempiricism,” and the “extreme a priorism” of his former mentor, Ludwig Von Mises (see Blaug, ch.4). He believed it was possible to differentiate between “fundamental (heuristic) hypotheses, which are not independently testable,” and “specific (factual) assumptions, which are supposed to correspond to observed facts or conditions.” The former was found in Keynes’s General Theory, and the latter in his Treatise on Money, Machlup explained. He thus proposed that empirical analysis be classified independently, under two categories: “Quantitative Research Techniques” and “Social Accounting, Measurements, and Numerical Hypotheses” (e.g., census data, expenditure surveys, input-output matrices, etc.). On the contrary, Haley wanted every category to cover the theoretical and empirical work related to a given subject matter. In his view, separating them was impossible, even meaningless: “Is there any theory that is not abstract? And, for that matter, is there any economic theory worth its salt that is not applied,” he teased Machlup. Also, he wanted to avoid the idea that “class 1 is theory, the rest are applied … How about monetary theory, international trade theory, business cycle theory?” He accordingly designed the top category to encompass price theory, but also statistical demand analysis, as well as “both theoretical and empirical studies of, e.g., the consumption function [and] economic growth models of the Harrod-Domar variety,” among other subjects. He eschewed any “theory” heading, which he replaced with titles such as “Price system; National Income Analysis.” His scheme eventually prevailed, but “theory” was reinstated in the title of the contentious category.

It’s the Economics that Got Small

| Peter Klein |

Joe Gillis: You’re Norma Desmond. You used to be in silent pictures. You used to be big.

Norma Desmond: I *am* big. It’s the *pictures* that got small.

— Sunset Boulevard (1950)

John List gave the keynote address at this weekend’s Southern Economic Association annual meeting. List is a pioneer in the use by economists of field experiments or randomized controlled trials, and his talk summarized some of his recent work and offered some general reflections on the field. It was a good talk, lively and engaging, and the crowd gave him a very enthusiastic response.

List opened and closed his talk with a well-known quote from Paul Samuelson’s textbook (e.g., this version from the 1985 edition, coauthored with William Nordhaus): “Economists . . . cannot perform the controlled experiments of chemists and biologists because they cannot easily control other important factors.” While professing appropriate respect for the achievements of Samuelson and Nordhaus, List shared the quote mainly to ridicule it. The rise of behavioral and experimental economics over the last few decades — in particular, the recent literature on field experiments or RCTs — shows that economists can and do perform experiments. Moreover, List argues, field experiments are even better than using laboratories, or conventional econometric methods with instrumental variables, propensity score matching, differences-in-differences, etc., because random assignment can do the identification. With a large enough sample, and careful experimental design, the researcher can identify causal relationships by comparing the effects of various interventions on treatment and control groups in the field, in a natural setting, not an artificial or simulated one.

While I enjoyed List’s talk, I became increasingly frustrated as it progressed, and found myself — I can’t believe I’m writing these words — defending Samuelson and Nordhaus. Of course, not only neoclassical economists, but nearly all economists, especially the Austrians, have denied explicitly that economics is an experimental science. “History can neither prove nor disprove any general statement in the manner in which the natural sciences accept or reject a hypothesis on the ground of laboratory experiments,” writes Mises (Human Action, p. 31). “Neither experimental verification nor experimental falsification of a general proposition are possible in this field.” The reason, Mises argues, is that history consists of non-repeatable events. “There are in [the social sciences] no such things as experimentally established facts. All experience in this field is, as must be repeated again and again, historical experience, that is, experience of complex phenomena” (Epistemological Problems of Economics, p. 69). To trace out relationships among such complex phenomena requires deductive theory.

Does experimental economics disprove this contention? Not really. List summarized two strands of his own work. The first deals with school achievement. List and his colleagues have partnered with a suburban Chicago school district to perform a series of randomized controlled trials on teacher and student performance. In one set of experiments, teachers were given various monetary incentives if their students improved their scores on standardized tests. The experiments revealed strong evidence for loss aversion: offering teachers year-end cash bonuses if their students improved had little effect on test scores, but giving teachers cash up front, and making them return it at the end of the year if their students did not improve, had a huge effect. Likewise, giving students $20 before a test, with the understanding that they have to give the money back if they don’t do well, leads to large improvements in test scores. Another set of randomized trials showed that responses to charitable fundraising letters are strongly impacted by the structure of the “ask.”

To be sure, this is interesting stuff, and school achievement and fundraising effectiveness are important social problems. But I found myself asking, again and again, where’s the economics? The proposed mechanisms involve a little psychology, and some basic economic intuition along the lines of “people respond to incentives.” But that’s about it. I couldn’t see anything in the design and execution of these experiments that would require a PhD in economics, or sociology, or psychology, or even a basic college economics course. From the perspective of economic theory, the problems seem pretty trivial. I suspect that Samuelson and Nordhaus had in mind the “big questions” of economics and social science: Is capitalism better than socialism? What causes business cycles? Is there a tradeoff between inflation and unemployment? What is the case for free trade? Should we go back to the gold standard? Why do nations go to war? It’s not clear to me how field experiments can shed light on these kinds of problems. Sure, we can use randomized controlled trials to find out why some people prefer red to blue, or what affects their self-reported happiness, or why we eat junk food instead of vegetables. But do you really need to invest 5-7 years getting a PhD in economics to do this sort of work? Is this the most valuable use of the best and brightest in the field?

My guess is that Samuelson and Nordhaus would reply to List: “We are big. It’s the economics that got small.”

See also: Identification versus Importance

Rich Makadok on Formal Modeling and Firm Strategy

[A guest post from Rich Makadok, lifted from the comments section of the Tirole post below.]

Peter invited me to reply to [Warren Miller’s] comment, so I’ll try to offer a defense of formal economic modeling.

In answering Peter’s invitation, I’m at a bit of a disadvantage because I am definitely NOT an IO economist (perhaps because I actually CAN relax). Rather, I’m a strategy guy — far more interested in studying the private welfare of firms than the public welfare of economies (plus, it pays better and is more fun). So, I am in a much better position to comment on the benefits that the game-theoretic toolbox is currently starting to bring to the strategy field than on the benefits that it has brought to the economics discipline over the last four decades (i.e., since Akerlof’s 1970 Lemons paper really jump-started the trend).

Peter writes, “game theory was supposed to add transparency and ‘rigor’ to the analysis.” I have heard this argument many times (e.g., Adner et al, 2009 AMR), and I think it is a red herring, or at least a side show. Yes, formal modeling does add transparency and rigor, but that’s not its main benefit. If the only benefit of formal modeling were simply about improving transparency and rigor then I suspect that it would never have achieved much influence at all. Formal modeling, like any research tool or method, is best judged according to the degree of insight — not the degree of precision — that it brings to the field.

I can’t think of any empirical researcher who has gained fame merely by finding techniques to reduce the amount of noise in the estimate of a regression parameter that has already been the subject of other previous studies. Only if that improved estimation technique generates results that are dramatically different from previous results (or from expected results) would the improved precision of the estimate matter much — i.e., only if the improved precision led to a valuable new insight. In that case, it would really be the insight that mattered, not the precision. The impact of empirical work is proportionate to its degree of new insight, not to its degree of precision. The excruciatingly unsophisticated empirical methods in Ned Bowman’s highly influential “Risk-Return Paradox” and “Risk-Seeking by Troubled Firms” papers provide a great example of this point.

The same general principle is true of theoretical work as well. I can’t think of any formal modeler who has gained fame merely by sharpening the precision of an existing verbal theory. Such minor contributions, if they get published at all, are barely noticed and quickly forgotten. A formal model only has real impact when it generates some valuable new insight. As with empirics, the insight is what really matters, not the precision.

Tirole

| Peter Klein |

As a second-year economics PhD student I took the field sequence in industrial organization. The primary text in the fall course was Jean Tirole’s Theory of Industrial Organization, then just a year old. I found it a difficult book — a detailed overview of the “new,” game-theoretic IO, featuring straightforward explanations and numerous insights and useful observations but shot through with brash, unsubstantiated assumptions and written in an extremely terse, almost smug style that rubbed me the wrong way. After all, game theory was supposed to add transparency and “rigor” to the analysis, bringing to light the hidden assumptions of the old-fashioned, verbal models, but Tirole combined math and ad hoc verbal asides in equal measure. (Sample statement: “The Coase theorem (1960) asserts that an optimal allocation of resources can always be achieved through market forces, irrespective of the legal liability assignment, if information is perfect and transactions are costless.” And then: “We conclude that the Coase theorem is unlikely to apply here and that selective government intervention may be desirable.”) Well, that’s the way formal theorists write and, if you know the code and read wisely, you can gain insight into how these economists think about things. Is it the best way to learn about real markets and real competition? Tirole takes it as self-evident that MIT-style theory is a huge advance over the earlier IO literature, which he characterizes as “the old oral tradition of behavioral stories.” He does not, to my knowledge, deal with the “new learning” of the 1960s and 1970s, associated mainly with Chicago economists (but also Austrian and public choice economists) that emphasized informational and incentive problems of regulators as well as firms.

Tirole is one of the most important economists in modern theoretical IO, public economics, regulation, and corporate finance, and it’s no surprise that the Nobel committee honored him with today’s prize. The Nobel PR team struggled to summarize his contributions for the nonspecialist reader (settling on the silly phrase that his work shows how to “tame” big firms) but you can find decent summaries in the usual places (e.g., WSJ, NYT, Economist) and sympathetic, even hagiographic treatments in the blogosphere (Cowen, Gans). By all accounts Tirole is a nice guy and an excellent teacher, as well as the first French economics laureate since Maurice Allais, so bully for him.

I do think Tirole-style IO is an improvement over the old structure-conduct-performance paradigm, which focused on simple correlations rather than causal explanations, and eschewed comparative institutional analysis, modeling regulators as omniscient, benevolent dictators. The newer approach starts with agency theory and information theory — e.g., modeling regulators as imperfectly informed principals and regulated firms as agents whose actions might differ from those preferred by their principals — and thus draws attention to underlying mechanisms, differences in incentives and information, dynamic interaction, and so on. However, the newer approach ultimately rests on the old market structure / market power analysis in which monopoly is defined as the short-term ability to set price above marginal cost, consumer welfare is measured as the area under the static demand curve, and so on. It’s neoclassical monopoly and competition theory on steroids, and hence side-steps the interesting objections raised by the Austrians and UCLA price theorists. In other words, the new IO focuses on more complex interactions while still eschewing comparative institutional analysis and modeling regulators as benevolent, albeit imperfectly informed, “social planners.”

As a student I found Tirole’s analysis extremely abstract, with little attention to how these theories might work in practice. Even Tirole’s later book with Jean-Jacques Laffont, A Theory of Incentives in Procurement and Regulation, is not very applied. But evidently Tirole has played a large personal and professional role in training and advising European regulatory bodies, so his work seems to have had a substantial impact on policy. (See, however, Sam Peltzman’s unflattering review of the 1989 Handbook of Industrial Organization, which complains that game-theoretic IO seems more about solving clever puzzles than understanding real markets.)
