Posts filed under ‘Methods/Methodology/Theory of Science’

History of Economic Thought Revival?

| Peter Klein |

More on the AEA meetings: I didn’t attend enough sessions to get a feel for overall directions and trends, but David Warsh (via Lynne) detected a revival of interest in the history of economic thought:

Interesting, too, was the undercurrent to be found in many conversations of interest in the history of economics itself. History of economic thought — or history of science, if you prefer — is a subject that has all but disappeared in the last thirty years as a topic of major research interest or as a subject of courses in top graduate schools — precisely the period of economic triumphalism.

I certainly can’t prove a resurgence of interest in economics past as it bears upon the present, or even document it beyond a few suggestive facts. The history of thought sessions in the meetings proceeded in their customary grooves — a retrospective on the rational expectations assumption fifty years after it was introduced, Irving Fisher’s The Purchasing Power of Money at one hundred.

But there were portents of change in at least one session on “rethinking the core” of graduate education. James Heckman, of the University of Chicago, endorsed the possibility of restoring to the graduate curriculum high-level elective courses in the history of economic thought. “People in the past were smart and they made mistakes and had insights,” he said afterwards. “We have sometimes forgotten the insights and we have sometimes repeated the same mistakes.”

An interesting challenge to what Murray Rothbard called the “Whig theory” of science, the view that dominates contemporary research in most of the social sciences.

Why Do Bad Ideas Spread? Luzzetti and Ohanian on the Rise and Fall of Keynesianism

| Peter Klein |

O&M generally takes a dim view of Keynesian economics. And yet Keynesianism triumphed after WWII and, while mostly dormant among academics from the 1970s to the 2000s, made a sweeping comeback over the last 2-3 years. If we anti-Keynesians are so smart, why is Keynesianism so popular?

This is an important question for the history, philosophy, and sociology of science, and we’ve addressed it before. Keynesianism appeals to fine-tuners, is easily formalized, appeared to “work” during and after WWII, has a “progressive” and “scientific” veneer, and justifies policies that governments have long championed (but all serious economists opposed).

Matthew Luzzetti and Lee Ohanian propose a similar narrative in their new NBER paper, “The General Theory of Employment, Interest, and Money After 75 Years: The Importance of Being in the Right Place at the Right Time.” In a nutshell, Keynesianism told people what they wanted to hear, gave them hope that the “new” economics could cure the Depression and bring long-term prosperity, worked well with the new empirical methods appearing in the 1940s and 1950s, and seemed consistent with observation. By the 1970s, however, the situation became almost reversed, and Keynesianism was dumped by the research community. Here’s an excerpt from the introduction: (more…)

Friedman, 1953

| Lasse Lien |

Some things just cannot be ignored. A prime example is Friedman’s 1953 essay “The Methodology of Positive Economics.” As most O&M readers will know, Friedman’s key claim is that a theory should be judged by its predictive accuracy, not the realism of its assumptions. Indeed, a theory that makes dramatic (i.e., unrealistic) simplifying assumptions and still generates good predictions is considered better than a more complex (i.e., realistic) theory with the same predictive performance. Few texts, and surely no other text on economic methodology, are loved, hated, and cited by so many. In much of mainstream economics Friedman’s position, or some version thereof, is routinely relied upon. For example, imagine defending game theory on the realism of its assumptions.

In 2003, on the 50th anniversary of its publication, a conference at Erasmus University in Rotterdam discussed the essay’s legacy. A collection of papers from the conference, edited by Uskali Mäki, was published as a book in 2009. A link to this book can be found here, and a recently published (harsh) review of the book can be found here. Note that the book review makes interesting reading even if you do not intend to read the book (but have read the original essay).

If this is your cup of tea, you might also like this remarkable paper.

History of Agricultural Research to 1945

| Peter Klein |

The importance of agricultural research in the intellectual history of science should be self-evident. Justus Liebig (1803-1873) was a key figure in both the development of laboratory methodology and agricultural science. Gregor Mendel’s (1822-1884) famous experiments were in plant breeding. Louis Pasteur’s (1822-1895) most celebrated work was on the cattle disease, anthrax. William Bateson (1861-1926), who coined the term genetics, was the first director of the John Innes Horticultural Institution in London, 1910-1926. Statistician, geneticist, and eugenics proponent R. A. Fisher (1890-1962) was employed by the Rothamsted Experimental Station, 1919 to 1933 (and temporarily relocated there from 1939 to 1943). Interwar and postwar virologists and molecular biologists did a great deal of work on the economically destructive tobacco mosaic virus.

From a very informative post at the history-of-science blog Ether Wave Propaganda (via Randy). In economics and management we’d have to add large swathes of production economics and risk-management research, the early papers in agency and contract theory dealing with sharecropping and land tenure, Griliches’s work on hybrid corn, and much more. What else would you put on the list?

The Viability of the Survivor Principle

| Lasse Lien |

The survivor principle (SP) holds that the competitive process weeds out inefficient firms, so that hypotheses about efficient behavior can be tested by observing how firms actually behave. This principle underlies a large body of empirical work in strategy, economics, and management. But do competitive markets actually reveal what is efficient? Can we safely take the shortcut of hypothesizing that, say, X is efficient, and then test that claim by observing whether firms actually do X? Surely the competitive process is somewhat noisy and imperfect. While we all know anecdotes that seem to disprove the SP, it may still be a reasonable approximation in the aggregate. This astonishing paper tests the validity of the SP in the context of corporate diversification.

The Diversity of Strategic Management Research

| Peter Klein |

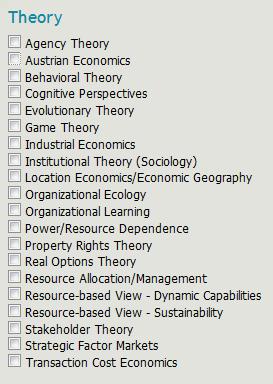

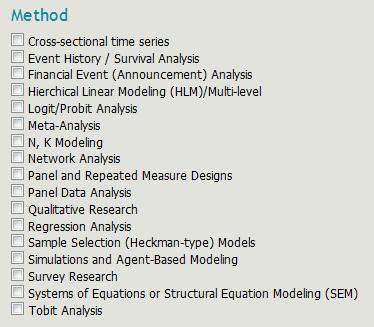

In my graduate class this morning we were discussing the diversity of theories and approaches in strategic management research when a useful illustration came to mind. I recently registered as a reviewer for the upcoming SMS conference and, as requested, indicated my areas of interest and expertise. The lists for “Theory” and “Method,” reproduced below, are instructive. I mean, can you imagine such lengthy lists for the AEA meeting or a conference in accounting or finance? (OK, perhaps still too short for some. . . .)

Entrepreneurial Ability as a Latent Variable

| Peter Klein |

It’s the weekend after Thanksgiving, so naturally I’m thinking about residuals — not leftover turkey and cranberry sauce, but entrepreneurial characteristics and behaviors as residuals, as latent variables that leave traces in outcomes that we can’t otherwise explain. I’ve argued before that common measures of entrepreneurship such as startups, self-employment, patents, venture funding, etc., while related to entrepreneurship, are epiphenomena, manifestations of an underlying, unobservable attribute or behavior such as judgment, alertness, innovation, or adaptation. (These are difficult, if not impossible, to measure directly; asking survey respondents, for instance, “How many opportunities did you identify this month?” is not quite the same thing as measuring Kirznerian alertness!) In a recent musing on strategic entrepreneurship I suggested that

many of the entrepreneurial capabilities we’re really interested in are latent, and best captured as residuals — e.g., something heritable and not explainable by other observables. . . . Mike Wright talked [at the CBS strategic entrepreneurship conference] about mobility, both across firms or projects (habitual entrepreneurs, spin-outs) and across countries (immigrant and returnee entrepreneurs, transnational entrepreneurs). From the perspective of research design, some of these movements may be useful for isolating the “entrepreneurial” essence, such as it is.

Seth Carnahan, Rajshree Agarwal, and Ben Campbell have an interesting new paper, “The Effect of Firm Compensation Structures on the Mobility and Entrepreneurship of Extreme Performers,” that takes this kind of approach, measuring entrepreneurial ability as the residual in a wage regression. Most of the variation in wages can be explained by age, experience, race, gender, education, and other observables; what remains is partly measurement error, but can also include a latent ability parameter. Seth, Rajshree, and Ben use this parameter, along with the wage structure of an employee’s existing firm, to explain which employees tend to leave to join new firms, particularly startups. Check it out!
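For readers who like to see the residual idea in action, here is a minimal sketch on simulated data. It is not the authors’ specification: the single regressor (experience), the coefficient values, and the helper names `ols_fit`, `residuals`, and `pearson` are all assumptions made for illustration. The point is only that when an unobserved ability term shifts wages, the part of the wage the observables cannot explain tracks that term closely.

```python
import random


def ols_fit(x, y):
    """Fit y = a + b*x by ordinary least squares and return (a, b)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def residuals(x, y):
    """Return the part of y left unexplained by the fitted line."""
    a, b = ols_fit(x, y)
    return [yi - (a + b * xi) for xi, yi in zip(x, y)]


def pearson(u, v):
    """Pearson correlation between two equal-length sequences."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    cov = sum((ui - mu) * (vi - mv) for ui, vi in zip(u, v))
    var_u = sum((ui - mu) ** 2 for ui in u)
    var_v = sum((vi - mv) ** 2 for vi in v)
    return cov / (var_u * var_v) ** 0.5


# Simulated (hypothetical) workers: log wage depends on an observable
# (experience), a latent ability term, and pure measurement error.
rng = random.Random(0)
n_workers = 500
experience = [rng.uniform(0, 30) for _ in range(n_workers)]
ability = [rng.gauss(0, 0.3) for _ in range(n_workers)]  # unobserved in real data
noise = [rng.gauss(0, 0.1) for _ in range(n_workers)]
log_wage = [2.0 + 0.03 * e + a + u
            for e, a, u in zip(experience, ability, noise)]

# The wage residual serves as the proxy for latent ability.
wage_residual = residuals(experience, log_wage)
print("corr(residual, latent ability):", round(pearson(wage_residual, ability), 3))
```

In real data, of course, the latent term cannot be checked directly, and the residual also absorbs measurement error and any omitted observables, which is exactly the caveat in the paragraph above.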

WEIRD Science

| Nicolai Foss |

It seems to be rather generally accepted that the Gold Standard of empirically-based science is the randomized experiment. Most economists and management scholars subscribe to this view, although its critics include notables like James Heckman (here). Arguably, the greatest badge of honor that one can aspire to nowadays as an economist (let’s forget about management scholars here ;-)) is to publish an experimentally-based paper in Nature or Science. However, the method of randomized experiments per se is one thing; the actual design of such experiments in social science and psychology is quite another.

In a recent paper, “The Weirdest People in the World,” Joseph Henrich, Steven Heine, and Ara Norenzayan point out that most designs involve samples drawn entirely from Western, Educated, Industrialized, Rich, and Democratic (WEIRD) societies, in practice often first-year students.

Of course, any serious experimental paper should be forthcoming about potential problems of external and ecological validity. The problem is certainly not neglected; in fact, some journals ban papers based on experiments involving students. However, the point of the Henrich et al. paper is to document how massive the problem really is: samples are overwhelmingly drawn from a total outlier population, namely WEIRD people, and sweeping conclusions are drawn from experiments using these subjects. To establish this, they compare results from WEIRD samples with those from non-WEIRD samples. They end their paper by discussing what practical research heuristics and research policy can do to address generalizability.

Here is the journal version of the paper (as well as various interesting comments). And here is the working paper. Warning: The Intro may not be for the faint of heart.

Miscellaneous Data and Measurement Links

| Peter Klein |

- Does Facebook data mining count as human subjects research? Some university IRBs apparently think so, even if the research uses only publicly posted profile information.

- SSRN’s Gregg Gordon explains the importance of knowing what we know we don’t know.

- Forget citation counts and PoP rankings: Do you know your personal social-media influence score? (via Cliff)

- Here’s Robert Merton’s essay on Lord Kelvin’s dictum. (Frank Knight’s alleged interpretation: “If you can’t measure, measure anyway.”)

Seven Deadly Sins of Contemporary Quantitative Political Analysis

| Peter Klein |

The rational-choice revolution in political science, universally acknowledged and generally respected though not always loved, has led to an explosion of quantitative empirical research (making political science, like some strands of sociology, look more and more like neoclassical economics). Philip Schrodt warns, however, against these seven deadly sins:

- Kitchen sink models that ignore the effects of collinearity;

- Pre-scientific explanation in the absence of prediction;

- Reanalyzing the same data sets until they scream;

- Using complex methods without understanding the underlying assumptions;

- Interpreting frequentist statistics as if they were Bayesian;

- Linear statistical monoculture at the expense of alternative structures;

- Confusing statistical controls and experimental controls.

The economics literature is somewhat better at 4-7, though clearly susceptible to 1 and 3. (I’m not a logical positivist so 2 isn’t a sin for me.) In any case, this paper is worth reading, particularly for graduate students across the social sciences.

Here are commentaries by Andrew Gelman and Matt Blackwell. Oh, and Schrodt maintains that “[t]he answer to these problems is solid, thoughtful, original work driven by an appreciation of both theory and data. Not postmodernism.” Take that, performativitarians! The paper also includes some historical and philosophical perspective, with “a review of how we got to this point from the perspective of 17th through 20th century philosophy of science, and . . . suggestions for changes in philosophical and pedagogical approaches that might serve to correct some of these problems.”

Mirowski on Backhouse and Fontaine, eds., The History of the Social Sciences since 1945

| Peter Klein |

I enjoyed Philip Mirowski’s first book, though I find his more recent stuff increasingly tendentious and repetitive. Still, a Mirowski review of Backhouse and Fontaine, eds., The History of the Social Sciences since 1945 (Cambridge, 2010) is worth a read. Interesting bit on organizational structure:

The historical generalization overlooked by the editors is that “interdisciplinary” social science units shoehorned into postwar university structures almost uniformly failed, whereas those founded as freestanding think tanks, from RAND to American Enterprise Institute to Cato and the Manhattan Institute, all persevered and succeeded. This is true even for the odd case of Carnegie GSIA, which became the model for other business schools across the nation, but only upon dispensing with the original interdisciplinary structures initially promoted by Herbert Simon (himself then exiled to a Department of Psychology). The lesson may be that the postwar American research university could not sustain true interdisciplinarity in social science inquiry, but that military and corporate sponsors of the think tanks could manage it, but only by yoking it to a format that enforced unquestioned responsiveness to the whims of the funders.

A familiar point of course to students of entrepreneurship and innovation, and yet another reason to suspect that innovation in higher education is more likely to come from outsiders (e.g., the notorious for-profit institutions) than incumbents.

Survivor Bias: WW2 Edition

| Lasse Lien |

During World War 2 the British Royal Air Force (and Navy) pioneered the use of empirical and statistical analysis to improve performance — laying the foundation for the field we now know as Operations Research.

One fascinating anecdote is how these pioneers used data on damage from German air defense fire. The RAF collected large amounts of data on exactly where returning aircraft had received damage. The intuitive recommendation would be to reinforce the aircraft where the data indicated they took the most damage. However, realizing that they only had data from surviving aircraft, the OR group under the leadership of Patrick Blackett recommended that they reinforce the aircraft in the sections where no damage was recorded in the data. Clever chaps, I dare say.
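The logic is easy to reproduce in a toy simulation. The sketch below is purely illustrative: the four sections, the lethality numbers, and the `simulate` function are made-up assumptions, not historical data. Hits land uniformly across sections, but a hit in some sections is far more likely to bring the aircraft down, so those sections are underrepresented in the damage recorded on aircraft that make it home.

```python
import random

SECTIONS = ["engine", "cockpit", "fuselage", "wings"]

# Hypothetical probability that a single hit in a section downs the aircraft.
LETHALITY = {"engine": 0.8, "cockpit": 0.6, "fuselage": 0.1, "wings": 0.1}


def simulate(n_aircraft, rng):
    """Return (hits on all aircraft, hits recorded on survivors) by section."""
    all_hits = dict.fromkeys(SECTIONS, 0)
    survivor_hits = dict.fromkeys(SECTIONS, 0)
    for _ in range(n_aircraft):
        # Each aircraft takes 0 to 3 hits, spread uniformly over sections.
        hits = [rng.choice(SECTIONS) for _ in range(rng.randint(0, 3))]
        # The aircraft returns only if it survives every hit it took.
        survived = all(rng.random() > LETHALITY[s] for s in hits)
        for section in hits:
            all_hits[section] += 1
            if survived:
                survivor_hits[section] += 1
    return all_hits, survivor_hits


all_hits, survivor_hits = simulate(20000, random.Random(42))

# Only survivor_hits would be visible to an analyst on the ground.
least_observed = min(SECTIONS, key=survivor_hits.get)
print("hits on all aircraft:   ", all_hits)
print("hits seen on survivors: ", survivor_hits)
print("least damage observed in:", least_observed)
```

Under these assumptions the section with the least recorded damage on returning aircraft is the one where hits are most lethal, which is why reinforcing the apparently undamaged sections is the right call.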

Using Content Analysis to Measure Scholarly Impact

| Peter Klein |

Two Princeton computer scientists have developed an algorithm for measuring scholarly impact based on content analysis, not citation data. Here’s the paper, and here’s a summary. I pay attention to impact factors (not as closely as some people) but am open to alternative measures — particularly any that might make me look better.

The Pretense-of-Knowledge Syndrome

| Dick Langlois |

Has Ricardo Caballero been reading Hayek (or maybe Brian Loasby)?

In this paper I argue that the current core of macroeconomics — by which I mainly mean the so-called dynamic stochastic general equilibrium approach — has become so mesmerized with its own internal logic that it has begun to confuse the precision it has achieved about its own world with the precision that it has about the real one. This is dangerous for both methodological and policy reasons. On the methodology front, macroeconomic research has been in “fine-tuning” mode within the local-maximum of the dynamic stochastic general equilibrium world, when we should be in “broad-exploration” mode. We are too far from absolute truth to be so specialized and to make the kind of confident quantitative claims that often emerge from the core. On the policy front, this confused precision creates the illusion that a minor adjustment in the standard policy framework will prevent future crises, and by doing so it leaves us overly exposed to the new and unexpected.

Econometrics Quote of the Day

| Peter Klein |

When I read empirical papers, I see many demonstrations of the IQ levels of economists, but I see the study of very few genuine empirical issues. I hardly see any intellectual capital at risk. As a result, I think we have much less progress as a science than we would if we made a serious effort to identify clearly some real issues.

That’s an Ed Leamer classic from 1988. The source is here. Related: “Puzzles or Problems?”

The Vromen/Abell-Felin-Foss Debate

| Nicolai Foss |

As readers of this blog will know (probably ad nauseam), Teppo Felin and I have been engaged over the last five years or so in a minor crusade in favor of building micro-foundations for, particularly, strategic management research (e.g., this paper with Peter Abell). I think it is fair to say that we have had some success with this project, as talk of micro-foundations has now become a part of contemporary strategic management discourse.

One of our critical targets has been the extant literature on capabilities and routines, which we argue works with collective-level constructs that have no clear micro-foundations. We make use of the famous Coleman “bathtub” diagram to explicate these ideas.

In a paper, “Micro-foundations in strategic management: Squaring Coleman’s diagram,” that has just been published online in Erkenntnis, Jack Vromen criticizes our reading of the routines and capabilities literature and, in particular, our use of the Coleman diagram to explicate our criticism. Basically, he argues that we are confused about the key distinction between constitutive and causal relations. Here is our Reply. The abstracts are copied in below. (more…)

Mises Quote of the Day

| Peter Klein |

Mises is known for his uncompromising defense of apriorism in economics, yet he began his career as a historicist, trained by Karl Grünberg, a Marxist and prominent member of the German Historical School. (Mises’s first publications were on land reform in his native Galicia and child-labor laws in Austria, both tediously empirical and inductive.) It was only later, after encountering Menger’s Principles, that Mises turned to social theory.

One of this week’s Mises Dailies features an excerpt from Mises’s 1957 book Theory and History and I can’t resist passing along this nugget, which is hopelessly out of touch with today’s enthusiasm for all things experimental:

[H]istorical experience is always the experience of complex phenomena, of the joint effects brought about by the operation of a multiplicity of elements. Such historical experience does not give the observer facts in the sense in which the natural sciences apply this term to the results obtained in laboratory experiments. (People who call their offices, studies, and libraries “laboratories” for research in economics, statistics, or the social sciences are hopelessly muddle-headed.)

Mises isn’t talking about the literal laboratories used by today’s experimental economists, but the casual use of such scientistic jargon when collecting and analyzing non-experimental data, whether primary or secondary. (He likewise rejected the language of “hypothesis testing” and the like when applied to social science.) Anyway, agree or disagree, you have to admit there are a lot of hopelessly muddle-headed people on university campuses.

Misbehavioral Antitrust

| Peter Klein |

I suggested earlier that behavioral economics could use a dose of comparative institutional analysis. The New Paternalists are very worried about various biases and forms of “irrationality” on the part of consumers, managers, entrepreneurs, investors, etc. but have little or nothing to say about the rationality of regulators, legislators, judges, and other non-market actors. Josh Wright and Judd Stone offer a parallel critique of behavioral economics applied to antitrust law: the behavioralists focus on presumed bias and irrationality on the part of incumbents, while largely ignoring the cognitive attributes of rivals and potential entrants. Josh and Judd propose an “irrelevance theorem”: “If one assumes a given behavioral bias applies to all firms — both incumbents and entrants — behavioral antitrust policy implications do not differ from those generated by the rational choice models of mainstream antitrust analysis.”

Addendum: Steve Horwitz made the comparative institutional argument in an earlier post that I unfortunately missed.

The Peer-Review Fetish

| Peter Klein |

I respect peer review as much as the next person and have done my share of publishing in peer-reviewed outlets. But I question the belief, expressed often in academic, media, and policy circles, that “not peer reviewed” means “worthless” and “peer reviewed” means “should be accepted without question.” (A corollary belief is that “funded by a private foundation or company” means “biased” while “funded by a government grant” means “neutral.”) In practice, the distinctions are not nearly so clean.

My thoughts on this were triggered by a revealing statement from Ronald Coase, quoted by Josh Gans and George Shepherd in their study of famous economics papers that were initially rejected, about his limited experience with peer review: “I have never found any difficulty in getting my articles published. I have either published in house journals (e.g. Economica) or the article was written as a result of a request (e.g. for a conference) and publication was assured.” Certainly no one would discount the importance of Coase’s 1937 and 1960 papers because they weren’t rigorously peer reviewed. (Can you imagine the inane referee remarks that “The Problem of Social Cost” would have generated?) More generally, consider the Journal of Law and Economics during Coase’s editorship in the 1960s and 1970s, the high-water mark of the JLE’s influence. Or, for that matter, Public Choice under Gordon Tullock, the JPE under George Stigler, or the Journal of Libertarian Studies under Murray Rothbard. These were edited somewhat unevenly, led by charismatic and strong-willed editors with idiosyncratic tastes, yet have been vastly influential in their respective fields.

Peer review serves a useful function and probably improves the quality of published output, on average. But let’s not make a fetish of it.
